Data Manipulation

概念

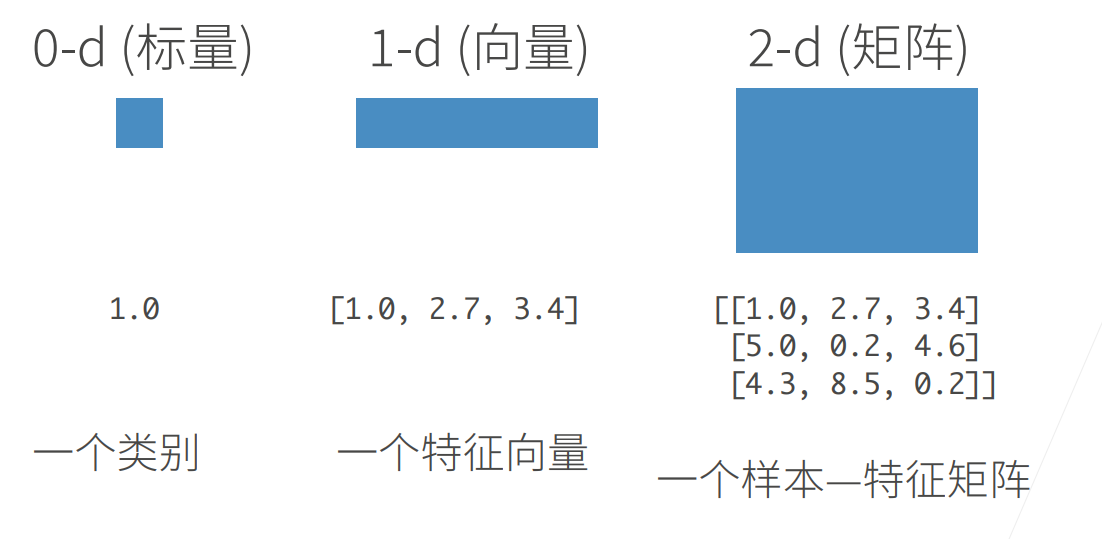

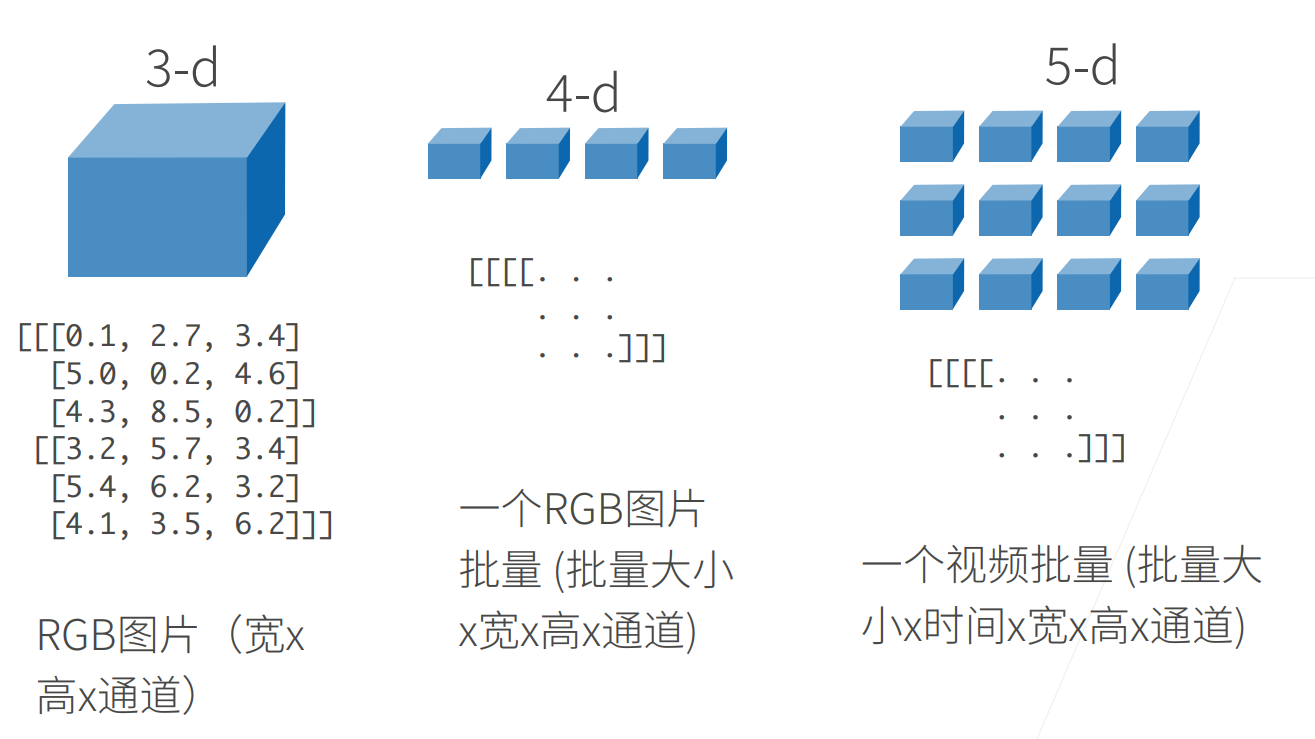

N维数组是机器学习和神经网络的主要数据结构,如下图所示:

Common Operations

Creating Tensors

Tensor Attributes

Practice

The complete run is shown below:


<!-- #include-env-start: D:/code/klc/test/share4ai/docs/book/dive_into_on_dl/dl_basic -->

```python

import torch

scalar = torch.tensor(7)
scalar.ndim  # -> 0

scalar.item()  # -> 7

vector = torch.tensor([7, 7])
vector  # -> tensor([7, 7])

# Number of dimensions: .ndim and .dim() return the same value
print(vector.ndim)   # -> 1
print(vector.dim())  # -> 1

vector.shape  # -> torch.Size([2])

# Matrix
MATRIX = torch.tensor([[7, 8],
                       [9, 10]])
MATRIX.ndim, MATRIX.shape  # -> (2, torch.Size([2, 2]))

# Tensor
TENSOR = torch.tensor([[[1, 2, 3],
                        [3, 6, 9],
                        [2, 4, 5]]])
TENSOR
# Output:
# tensor([[[1, 2, 3],
#          [3, 6, 9],
#          [2, 4, 5]]])

TENSOR.ndim   # -> 3
TENSOR.shape  # -> torch.Size([1, 3, 3])

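# Supplementary sketch (not part of the original run): a few more tensor
# attributes, using only standard PyTorch calls. ndim equals the length of
# shape, .size() is an alias of .shape, and .numel() counts all elements.
# The variable name `t` is illustrative.
import torch

t = torch.tensor([[[1, 2, 3],
                   [3, 6, 9],
                   [2, 4, 5]]])
print(t.ndim == len(t.shape))  # -> True
print(t.size() == t.shape)     # -> True
print(t.numel())               # -> 9 (1 * 3 * 3 elements)
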
random_tensor = torch.rand(size=(3, 4))
random_tensor, random_tensor.dtype
# Output (values are random and differ between runs):
# (tensor([[0.9263, 0.1787, 0.2141, 0.0935],
#          [0.0975, 0.8770, 0.2748, 0.6494],
#          [0.9494, 0.6937, 0.3800, 0.3545]]),
#  torch.float32)

# Create a tensor of all zeros
zeros = torch.zeros(size=(3, 4))
zeros, zeros.dtype
# Output:
# (tensor([[0., 0., 0., 0.],
#          [0., 0., 0., 0.],
#          [0., 0., 0., 0.]]),
#  torch.float32)

# Create a tensor of all ones
ones = torch.ones(size=(3, 4))
ones, ones.dtype
# Output:
# (tensor([[1., 1., 1., 1.],
#          [1., 1., 1., 1.],
#          [1., 1., 1., 1.]]),
#  torch.float32)

# Create a range of values from 0 to 9 (end is exclusive)
zero_to_ten = torch.arange(start=0, end=10, step=1)
zero_to_ten  # -> tensor([0, 1, 2, 3, 4, 5, 6, 7, 8, 9])

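# Supplementary sketch (not part of the original run): torch.arange excludes
# `end` and takes a step size, while torch.linspace includes both endpoints
# and takes the number of points (`steps`) instead.
import torch

evens = torch.arange(start=0, end=10, step=2)
print(evens)   # -> tensor([0, 2, 4, 6, 8])

points = torch.linspace(start=0, end=1, steps=5)
print(points)  # -> tensor([0.0000, 0.2500, 0.5000, 0.7500, 1.0000])
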
# Create tensors of zeros or ones with the same shape (and dtype) as another tensor
ten_zeros = torch.zeros_like(input=zero_to_ten)
print(ten_zeros)  # -> tensor([0, 0, 0, 0, 0, 0, 0, 0, 0, 0])

ten_ones = torch.ones_like(input=zero_to_ten)
print(ten_ones)   # -> tensor([1, 1, 1, 1, 1, 1, 1, 1, 1, 1])

float_32_tensor = torch.tensor(data=[5, 6, 7], dtype=torch.float32)
float_32_tensor, float_32_tensor.dtype  # -> (tensor([5., 6., 7.]), torch.float32)

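# Supplementary sketch (not part of the original run): without an explicit
# dtype, torch.tensor infers one from the data (Python ints -> int64, Python
# floats -> the default float32). Mixing the two in arithmetic promotes the
# result to the floating-point type.
import torch

ints = torch.tensor([5, 6, 7])
floats = torch.tensor([5.0, 6.0, 7.0])
print(ints.dtype)             # -> torch.int64
print(floats.dtype)           # -> torch.float32
print((ints + floats).dtype)  # -> torch.float32 (type promotion)
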
# Create a tensor
some_tensor = torch.rand(3, 4)

# Find out details about it
print(some_tensor)
print(f"Shape of tensor: {some_tensor.shape}")
print(f"Datatype of tensor: {some_tensor.dtype}")
print(f"Device tensor is stored on: {some_tensor.device}")  # defaults to CPU
# Output (values are random):
# tensor([[0.1438, 0.8729, 0.1077, 0.5648],
#         [0.7661, 0.2413, 0.0707, 0.3947],
#         [0.8517, 0.7686, 0.3598, 0.2205]])
# Shape of tensor: torch.Size([3, 4])
# Datatype of tensor: torch.float32
# Device tensor is stored on: cpu

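# Supplementary sketch (not part of the original run) of device-agnostic code:
# use the GPU when torch.cuda.is_available() reports one, otherwise fall back
# to the CPU, and move the tensor with .to(device). The variable names are
# illustrative.
import torch

device = "cuda" if torch.cuda.is_available() else "cpu"
t = torch.rand(3, 4)
tensor_on_device = t.to(device)
print(tensor_on_device.device)  # cpu on a machine without a CUDA GPU
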
# Manipulating tensors
# Create a tensor of values and add a number to it
tensor = torch.tensor([1, 2, 3])
print(tensor + 10)   # -> tensor([11, 12, 13])

# Subtract 10 (the result is printed; tensor itself is not modified)
print(tensor - 10)   # -> tensor([-9, -8, -7])

# Multiply it by 10 (operator and function forms are equivalent)
print(tensor * 10)                 # -> tensor([10, 20, 30])
print(torch.multiply(tensor, 10))  # -> tensor([10, 20, 30])

# Divide it by 10 (true division returns a floating-point tensor)
print(tensor / 10)   # -> tensor([0.1000, 0.2000, 0.3000])

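# Supplementary sketch (not part of the original run): the arithmetic above
# returns new tensors and leaves the input unchanged. To keep a result,
# reassign it, or call an in-place method (trailing underscore, e.g. add_),
# which modifies the tensor itself.
import torch

t = torch.tensor([1, 2, 3])
t - 10      # result discarded; t is unchanged
print(t)    # -> tensor([1, 2, 3])

t = t - 10  # reassign
print(t)    # -> tensor([-9, -8, -7])

t.add_(10)  # in-place update of t
print(t)    # -> tensor([1, 2, 3])
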
# Element-wise multiplication (each element is multiplied by the element at the same index: 0->0, 1->1, 2->2)
print(tensor, "*", tensor)
print("Equals:", tensor * tensor)
# Output:
# tensor([1, 2, 3]) * tensor([1, 2, 3])
# Equals: tensor([1, 4, 9])

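# Supplementary sketch (not part of the original run) of broadcasting:
# element-wise operations also accept tensors of different shapes. Shapes are
# compared from the last dimension; each pair of sizes must be equal or one of
# them must be 1, and missing leading dimensions count as 1.
import torch

col = torch.tensor([[1], [2], [3]])  # shape (3, 1)
row = torch.tensor([10, 20, 30])     # shape (3,), treated as (1, 3)
table = col * row                    # broadcast to shape (3, 3)
print(table.shape)  # -> torch.Size([3, 3])
print(table)
# tensor([[10, 20, 30],
#         [20, 40, 60],
#         [30, 60, 90]])
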
# Matrix multiplication: for two 1-D tensors, torch.matmul returns their dot product (1*1 + 2*2 + 3*3)
torch.matmul(tensor, tensor)  # -> tensor(14)

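# Supplementary sketch (not part of the original run): the @ operator is
# shorthand for torch.matmul. For 1-D tensors the result equals torch.dot and
# the sum of the element-wise product.
import torch

v = torch.tensor([1, 2, 3])
print(v @ v)            # -> tensor(14)
print(torch.dot(v, v))  # -> tensor(14)
print((v * v).sum())    # -> tensor(14)
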
# Shapes must be compatible: the inner dimensions have to match
tensor_A = torch.tensor([[1, 2],
                         [3, 4],
                         [5, 6]], dtype=torch.float32)

tensor_B = torch.tensor([[7, 10],
                         [8, 11],
                         [9, 12]], dtype=torch.float32)

# (3, 2) @ (3, 2): the inner dimensions (2 and 3) differ, so matmul raises an error
try:
    torch.matmul(tensor_A, tensor_B)
except RuntimeError as e:
    print(f"RuntimeError: {e}")
# Output:
# RuntimeError: mat1 and mat2 shapes cannot be multiplied (3x2 and 3x2)

# The operation works when tensor_B is transposed
print(f"Original shapes: tensor_A = {tensor_A.shape}, tensor_B = {tensor_B.shape}\n")
print(f"New shapes: tensor_A = {tensor_A.shape} (same as above), tensor_B.T = {tensor_B.T.shape}\n")
print(f"Multiplying: {tensor_A.shape} * {tensor_B.T.shape} <- inner dimensions match\n")
print("Output:\n")
output = torch.matmul(tensor_A, tensor_B.T)
print(output)
print(f"\nOutput shape: {output.shape}")
# Output:
# Original shapes: tensor_A = torch.Size([3, 2]), tensor_B = torch.Size([3, 2])
#
# New shapes: tensor_A = torch.Size([3, 2]) (same as above), tensor_B.T = torch.Size([2, 3])
#
# Multiplying: torch.Size([3, 2]) * torch.Size([2, 3]) <- inner dimensions match
#
# Output:
#
# tensor([[ 27.,  30.,  33.],
#         [ 61.,  68.,  75.],
#         [ 95., 106., 117.]])
#
# Output shape: torch.Size([3, 3])

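# Supplementary sketch (not part of the original run): a loop-based matrix
# multiplication that shows why the inner dimensions must match. For A of
# shape (n, k) and B of shape (k, m), each entry C[i, j] is the dot product of
# row i of A with column j of B, so C has shape (n, m). The helper
# naive_matmul is hypothetical and only for illustration; use torch.matmul in
# practice.
import torch

def naive_matmul(A, B):
    n, k = A.shape
    k2, m = B.shape
    if k != k2:  # inner dimensions must agree
        raise ValueError(f"inner dimensions differ: {k} vs {k2}")
    C = torch.zeros(n, m, dtype=A.dtype)
    for i in range(n):
        for j in range(m):
            C[i, j] = (A[i, :] * B[:, j]).sum()  # row i . column j
    return C

A = torch.tensor([[1., 2.], [3., 4.], [5., 6.]])
B = torch.tensor([[7., 10.], [8., 11.], [9., 12.]])
C = naive_matmul(A, B.T)
print(torch.equal(C, A @ B.T))  # -> True
print(C.shape)                  # -> torch.Size([3, 3])
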

# Since the linear layer starts with a random weights matrix, let's make it reproducible (more on this later)
torch.manual_seed(42)

# torch.nn.Linear uses matrix multiplication
# Each input sample has 2 features; each output sample has 6 features
linear = torch.nn.Linear(in_features=2,   # in_features must match the inner (last) dimension of the input
                         out_features=6)  # out_features sets the size of the output's last dimension
x = tensor_A
print(f"Input tensor_A: {tensor_A}\n")
output = linear(x)
print(f"Input shape: {x.shape}\n")
print(f"Output:\n{output}\n\nOutput shape: {output.shape}")
# Output:
# Input tensor_A: tensor([[1., 2.],
#         [3., 4.],
#         [5., 6.]])
#
# Input shape: torch.Size([3, 2])
#
# Output:
# tensor([[2.2368, 1.2292, 0.4714, 0.3864, 0.1309, 0.9838],
#         [4.4919, 2.1970, 0.4469, 0.5285, 0.3401, 2.4777],
#         [6.7469, 3.1648, 0.4224, 0.6705, 0.5493, 3.9716]],
#        grad_fn=<AddmmBackward0>)
#
# Output shape: torch.Size([3, 6])

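# Supplementary sketch (not part of the original run): torch.nn.Linear
# computes y = x @ W.T + b, where linear.weight has shape
# (out_features, in_features) and linear.bias has shape (out_features,).
# Recomputing the output by hand should match the layer's output.
import torch

torch.manual_seed(42)
linear = torch.nn.Linear(in_features=2, out_features=6)
inputs = torch.tensor([[1., 2.], [3., 4.], [5., 6.]])

manual = inputs @ linear.weight.T + linear.bias
print(linear.weight.shape)                    # -> torch.Size([6, 2])
print(torch.allclose(linear(inputs), manual)) # -> True
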
# min, max, mean, sum
x = torch.arange(0, 100, 10)
x  # -> tensor([ 0, 10, 20, 30, 40, 50, 60, 70, 80, 90])

print(f"Minimum: {x.min()}")
print(f"Maximum: {x.max()}")
# print(f"Mean: {x.mean()}")  # errors: x is int64, and mean() needs a floating-point dtype
print(f"Mean: {x.type(torch.float32).mean()}")  # convert to float first
print(f"Sum: {x.sum()}")
# Output:
# Minimum: 0
# Maximum: 90
# Mean: 45.0
# Sum: 450

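# Supplementary sketch (not part of the original run): min, max, mean and sum
# also accept a `dim` argument that reduces along one axis instead of over
# the whole tensor.
import torch

m = torch.tensor([[1., 2., 3.],
                  [4., 5., 6.]])
print(m.sum(dim=0))   # column sums -> tensor([5., 7., 9.])
print(m.sum(dim=1))   # row sums    -> tensor([ 6., 15.])
print(m.mean(dim=1))  # row means   -> tensor([2., 5.])
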
# Positional min/max
# Create a tensor
tensor = torch.arange(10, 100, 10)
print(f"Tensor: {tensor}")

# Return the index of the max and min values
print(f"Index where max value occurs: {tensor.argmax()}")
print(f"Index where min value occurs: {tensor.argmin()}")
# Output:
# Tensor: tensor([10, 20, 30, 40, 50, 60, 70, 80, 90])
# Index where max value occurs: 8
# Index where min value occurs: 0

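# Supplementary sketch (not part of the original run): with `dim`, argmax
# returns one index per row. This is a common way to turn a batch of class
# scores into predicted labels. The score values are made up for illustration.
import torch

scores = torch.tensor([[0.1, 0.7, 0.2],
                       [0.5, 0.3, 0.2]])
preds = scores.argmax(dim=1)
print(preds)  # -> tensor([1, 0])
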
# Create a tensor and check its datatype
tensor = torch.arange(10., 100., 10.)
print(f"tensor.dtype: {tensor.dtype}")

# Create a float16 tensor
tensor_float16 = tensor.type(torch.float16)
print(f"tensor_float16: {tensor_float16}")

# Create an int8 tensor
tensor_int8 = tensor.type(torch.int8)
print(f"tensor_int8: {tensor_int8}")
# Output:
# tensor.dtype: torch.float32
# tensor_float16: tensor([10., 20., 30., 40., 50., 60., 70., 80., 90.], dtype=torch.float16)
# tensor_int8: tensor([10, 20, 30, 40, 50, 60, 70, 80, 90], dtype=torch.int8)

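# Supplementary sketch (not part of the original run): converting floats to
# an integer dtype drops the fractional part (truncation toward zero), so
# information can be lost. .to(dtype) is an equivalent alternative to
# .type(dtype).
import torch

f = torch.tensor([3.7, -3.7, 2.5])
as_int = f.to(torch.int8)
print(as_int.tolist())  # -> [3, -3, 2]
print(as_int.dtype)     # -> torch.int8
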
# Reshaping, stacking, squeezing and unsqueezing
x = torch.arange(1., 8.)
print(x, x.shape)

# Add an extra dimension
x_reshaped = x.reshape(1, 7)
print(x_reshaped, x_reshaped.shape, x_reshaped.dim())
# Output:
# tensor([1., 2., 3., 4., 5., 6., 7.]) torch.Size([7])
# tensor([[1., 2., 3., 4., 5., 6., 7.]]) torch.Size([1, 7]) 2

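# Supplementary sketch (not part of the original run) for "stacking", which
# the comment above names but the notebook does not demonstrate.
# torch.stack joins tensors along a NEW dimension, while torch.cat joins them
# along an EXISTING one. reshape can also infer one dimension from -1.
import torch

a = torch.arange(1., 4.)  # tensor([1., 2., 3.])
b = torch.arange(4., 7.)  # tensor([4., 5., 6.])
print(torch.stack([a, b], dim=0).shape)      # -> torch.Size([2, 3])
print(torch.stack([a, b], dim=1).shape)      # -> torch.Size([3, 2])
print(torch.cat([a, b], dim=0).shape)        # -> torch.Size([6])
print(torch.arange(6).reshape(2, -1).shape)  # -> torch.Size([2, 3])
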
print(f"Previous tensor: {x_reshaped}")
print(f"Previous shape: {x_reshaped.shape}")

# Remove the extra size-1 dimension from x_reshaped
x_squeezed = x_reshaped.squeeze()
print(f"\nNew tensor: {x_squeezed}")
print(f"New shape: {x_squeezed.shape}")
# Output:
# Previous tensor: tensor([[1., 2., 3., 4., 5., 6., 7.]])
# Previous shape: torch.Size([1, 7])
#
# New tensor: tensor([1., 2., 3., 4., 5., 6., 7.])
# New shape: torch.Size([7])

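# Supplementary sketch (not part of the original run): squeeze() with no
# argument removes every size-1 dimension. squeeze(dim) only removes that one
# dimension, and only if its size is 1; otherwise the shape is unchanged.
import torch

y = torch.zeros(1, 3, 1)
print(y.squeeze().shape)       # -> torch.Size([3])
print(y.squeeze(dim=0).shape)  # -> torch.Size([3, 1])
print(y.squeeze(dim=1).shape)  # -> torch.Size([1, 3, 1]) (size 3, nothing removed)
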
print(f"Previous tensor: {x_squeezed}")
print(f"Previous shape: {x_squeezed.shape}")

# Add an extra dimension with unsqueeze
x_unsqueezed = x_squeezed.unsqueeze(dim=0)
print(f"\nNew tensor: {x_unsqueezed}")
print(f"New shape: {x_unsqueezed.shape}")

x_unsqueezed_1 = x_unsqueezed.unsqueeze(dim=0)
print(f"\nNew tensor: {x_unsqueezed_1}")
print(f"New shape: {x_unsqueezed_1.shape}")
# Output:
# Previous tensor: tensor([1., 2., 3., 4., 5., 6., 7.])
# Previous shape: torch.Size([7])
#
# New tensor: tensor([[1., 2., 3., 4., 5., 6., 7.]])
# New shape: torch.Size([1, 7])
#
# New tensor: tensor([[[1., 2., 3., 4., 5., 6., 7.]]])
# New shape: torch.Size([1, 1, 7])

# Create a tensor with a specific shape
x_original = torch.rand(size=(2, 1, 3))

# Permute the original tensor to rearrange the axis order
x_permuted = x_original.permute(2, 0, 1)  # old axis 0 -> position 1, 1 -> 2, 2 -> 0
print(f"Previous x_original: {x_original}, shape: {x_original.shape}")
print(f"New x_permuted: {x_permuted}, shape: {x_permuted.shape}")
# Output (values are random):
# Previous x_original: tensor([[[0.9862, 0.9704, 0.8391]],
#
#         [[0.0043, 0.9944, 0.0027]]]), shape: torch.Size([2, 1, 3])
# New x_permuted: tensor([[[0.9862],
#          [0.0043]],
#
#         [[0.9704],
#          [0.9944]],
#
#         [[0.8391],
#          [0.0027]]]), shape: torch.Size([3, 2, 1])

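# Supplementary sketch (not part of the original run): permute returns a view
# that shares memory with the original tensor, so writing through the view
# changes the original. The view is usually not contiguous in memory;
# .contiguous() returns a contiguous copy when one is needed.
import torch

orig = torch.zeros(2, 3, 4)
perm = orig.permute(2, 0, 1)  # shape (4, 2, 3); perm[i, j, k] is orig[j, k, i]
perm[0, 0, 0] = 99.0
print(orig[0, 0, 0].item())               # -> 99.0 (same memory)
print(perm.is_contiguous())               # -> False
print(perm.contiguous().is_contiguous())  # -> True
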
# Create a tensor
import torch

x = torch.arange(1, 10).reshape(1, 3, 3)
print(x, x.shape)

# Let's index bracket by bracket
print(f"First square bracket:\n{x[0]}")
print(f"Second square bracket: {x[0][0]}")
print(f"Third square bracket: {x[0][0][0]}")
# Output:
# tensor([[[1, 2, 3],
#          [4, 5, 6],
#          [7, 8, 9]]]) torch.Size([1, 3, 3])
# First square bracket:
# tensor([[1, 2, 3],
#         [4, 5, 6],
#         [7, 8, 9]])
# Second square bracket: tensor([1, 2, 3])
# Third square bracket: 1

# Get all values of the 0th dimension and only index 0 of the 1st dimension
print(x[:, 0])     # -> tensor([[1, 2, 3]])

# Get all values of the 0th and 1st dimensions but only index 1 of the 2nd dimension
print(x[:, :, 1])  # -> tensor([[2, 5, 8]])

# Get all values of the 0th dimension but only index 1 of the 1st and 2nd dimensions
print(x[:, 1, 1])  # -> tensor([5])

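# Supplementary sketch (not part of the original run): besides integer
# indices and slices, a tensor can be indexed with a boolean mask of the same
# shape, which returns the selected elements as a 1-D tensor. Negative
# indices count from the end.
import torch

grid = torch.arange(1, 10).reshape(1, 3, 3)
mask = grid > 5
print(grid[mask])   # -> tensor([6, 7, 8, 9])
print(grid[0, -1])  # last row of the first matrix -> tensor([7, 8, 9])
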
# NumPy array to tensor
import torch
import numpy as np

array = np.arange(1.0, 8.0)
tensor = torch.from_numpy(array)  # keeps NumPy's default dtype, float64
array, tensor
# Output:
# (array([1., 2., 3., 4., 5., 6., 7.]),
#  tensor([1., 2., 3., 4., 5., 6., 7.], dtype=torch.float64))

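# Supplementary sketch (not part of the original run): torch.from_numpy shares
# memory with the NumPy array, so an in-place change to the array also shows
# up in the tensor. torch.tensor(array) makes an independent copy. For a CPU
# tensor, tensor.numpy() likewise shares memory.
import numpy as np
import torch

arr = np.arange(1.0, 4.0)
shared = torch.from_numpy(arr)
copied = torch.tensor(arr)
arr += 1  # in-place update of the NumPy array
print(shared)  # -> tensor([2., 3., 4.], dtype=torch.float64)
print(copied)  # -> tensor([1., 2., 3.], dtype=torch.float64)
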
# Tensor to NumPy array
tensor = torch.ones(7)         # create a tensor of ones with dtype=float32
numpy_tensor = tensor.numpy()  # keeps dtype float32 unless converted
tensor, numpy_tensor
# Output:
# (tensor([1., 1., 1., 1., 1., 1., 1.]),
#  array([1., 1., 1., 1., 1., 1., 1.], dtype=float32))

# Create two random tensors
random_tensor_A = torch.rand(3, 4)
random_tensor_B = torch.rand(3, 4)

print(f"Tensor A:\n{random_tensor_A}\n")
print(f"Tensor B:\n{random_tensor_B}\n")
print("Does Tensor A equal Tensor B? (anywhere)")
random_tensor_A == random_tensor_B
# Output (values are random):
# Tensor A:
# tensor([[0.5858, 0.0322, 0.1154, 0.9938],
#         [0.2363, 0.4307, 0.0557, 0.1701],
#         [0.9812, 0.2468, 0.0813, 0.7867]])
#
# Tensor B:
# tensor([[0.7819, 0.1164, 0.1922, 0.0532],
#         [0.3552, 0.0449, 0.3584, 0.3483],
#         [0.6862, 0.6083, 0.0900, 0.6828]])
#
# Does Tensor A equal Tensor B? (anywhere)
# tensor([[False, False, False, False],
#         [False, False, False, False],
#         [False, False, False, False]])

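# Supplementary sketch (not part of the original run): two calls to
# torch.rand almost never produce equal tensors. Re-seeding the generator
# with the same value (torch.manual_seed, also used for nn.Linear earlier)
# before each call reproduces the same numbers. RANDOM_SEED is an
# illustrative name.
import torch

RANDOM_SEED = 42
torch.manual_seed(RANDOM_SEED)
random_tensor_C = torch.rand(3, 4)

torch.manual_seed(RANDOM_SEED)
random_tensor_D = torch.rand(3, 4)

print(torch.equal(random_tensor_C, random_tensor_D))  # -> True
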
torch.cuda.is_available()  # -> False (no CUDA GPU was available in this run)